
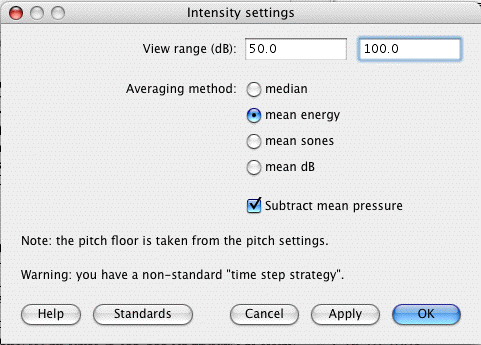

Context is indispensable for accurate tone perception, especially when the target tone system is as complex as that of Cantonese. However, not all contexts are equally beneficial. Speech contexts are usually more effective in improving lexical tone identification than nonspeech contexts matched in pitch information. Some potential factors that may contribute to these unequal effects have been proposed but, thus far, their plausibility remains unclear. To shed light on this issue, the present study compares the perception of lexical tones and their nonlinguistic counterparts under specific contextual (speech, nonspeech) and attentional (with/without focal attention) conditions. The results reveal a prominent congruency effect: target sounds tend to be identified more accurately when embedded in contexts of the same nature (speech/nonspeech). This finding suggests that speech and nonspeech sounds are partly processed by domain-specific mechanisms and that information from the same domain can be integrated more effectively than that from different domains. Therefore, domain-specific processing of speech could be the most likely cause of the unequal context effect. Moreover, focal attention is not a prerequisite for extracting contextual cues from speech and nonspeech during perceptual normalization. This finding implies that context encoding is highly automatic for native listeners.

The pitch and intensity features were extracted using the Praat tool. [...] the data, respectively, and Xij is the normalized data. While the pitch and duration of a sound can be modified with the ManipulationEditor (see Intro 8.1), you can also modify the intensity contour of an existing sound.
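The text above mentions normalized data Xij, but the normalization formula itself has not survived. As a minimal sketch, assuming a column-wise min-max scheme (an assumption, not something the source confirms), the following shows how extracted pitch and intensity values could be scaled to the range 0 to 1. The function name `min_max_normalize` and the sample values are hypothetical, and Xij is read here as the value of feature j for sample i.

```python
def min_max_normalize(rows):
    """Scale each column of a 2-D list to [0, 1].

    Assumes min-max normalization; the source does not state which
    scheme was actually used.
    """
    columns = list(zip(*rows))
    lows = [min(col) for col in columns]
    highs = [max(col) for col in columns]
    normalized = []
    for row in rows:
        new_row = []
        for value, low, high in zip(row, lows, highs):
            span = high - low
            # A constant column has no spread; map it to 0.0 to avoid dividing by zero.
            new_row.append((value - low) / span if span else 0.0)
        normalized.append(new_row)
    return normalized


# Hypothetical feature rows: [mean pitch in Hz, mean intensity in dB].
features = [[110.0, 60.0], [220.0, 70.0], [165.0, 65.0]]
normalized = min_max_normalize(features)
```

Scaling each feature separately keeps pitch (in Hz) and intensity (in dB) on a comparable footing, which matters if the two are later combined in one model.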
